
2014-04-21T07:00:03Z

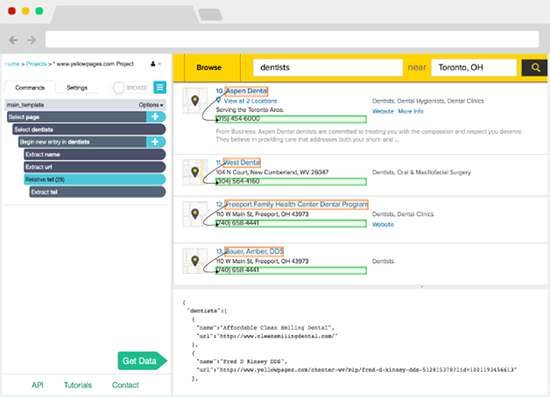


A little over a year ago I wrote an article on web scraping using Node.js. Today I'm revisiting the topic, but this time I'm going to use Python, so that the techniques offered by these two languages can be compared and contrasted.

The Problem

As I'm sure you know, I attended PyCon in Montréal earlier this month. The video recordings of all the talks and tutorials have already been released on YouTube, with an index available at pyvideo.org.

I thought it would be useful to know which videos of the conference are the most watched, so we are going to write a scraping script that will obtain the list of available videos from pyvideo.org and then get viewer statistics for each of the videos directly from their YouTube pages. Sounds interesting? Let's get started!

The Tools

There are two basic tasks that are used to scrape web sites:

- Load a web page to a string.

- Parse HTML from a web page to locate the interesting bits.

Python offers two excellent tools for the above tasks. I will use the awesome requests package to load web pages, and BeautifulSoup to do the parsing.

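We can put these two packages in a virtual environment, installing them with pip install requests beautifulsoup4 once the environment is activated.

If you are using Microsoft Windows, note that the command that activates the virtual environment is different from the Unix one: you should use venv\Scripts\activate.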

Basic Scraping Technique

The first thing to do when writing a scraping script is to manually inspect the page(s) to scrape to determine how the data can be located.

To begin with, we are going to look at the list of PyCon videos at http://pyvideo.org/category/50/pycon-us-2014. Inspecting the HTML source of this page, we find that each video in the list is represented by a <div> element with class video-summary-data that holds the links for that video.

So the first task is to load this page, and extract the links to the individual pages, since the links to the YouTube videos are in these pages.

Loading a web page using requests is extremely simple:
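As a minimal sketch (assuming requests is installed), the download step can be wrapped in a small helper. The name load_page(), the 10-second timeout and the raise_for_status() check are illustrative additions, not part of the original script. Note also that pyvideo.org has been redesigned since this article was written, so this URL may no longer serve the page described here.

```python
import requests

# Index page of the PyCon US 2014 videos, as given in the text
index_url = 'http://pyvideo.org/category/50/pycon-us-2014'


def load_page(url):
    """Download a web page and return its HTML as a string."""
    # ASSUMPTION: the timeout is an added safeguard so a stalled server cannot hang the script
    response = requests.get(url, timeout=10)
    response.raise_for_status()  # raise an exception for 4xx/5xx responses
    return response.text


if __name__ == '__main__':
    html = load_page(index_url)
    print(html[:200])  # show the beginning of the page
```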


That's it! After requests.get() returns, the HTML of the page is available in response.text.

The next task is to extract the links to the individual video pages. With BeautifulSoup this can be done using CSS selector syntax, which you may be familiar with if you work on the client side.

To obtain the links, we will use a selector that captures the <a> elements inside each <div> with class video-summary-data. Since there are several <a> elements for each video, we will filter them to include only those that point to a URL that begins with /video, which is unique to the individual video pages. The CSS selector that implements the above criteria is div.video-summary-data a[href^="/video"]. The following snippet of code uses this selector with BeautifulSoup to obtain the <a> elements that point to video pages:
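The sketch below runs this selector against a small hypothetical HTML fragment that imitates the structure described above, so it works without network access. The attribute value is quoted ("/video") because current BeautifulSoup versions hand CSS selectors to the soupsieve package, which rejects unquoted values that are not valid CSS identifiers. Passing 'html.parser' explicitly selects Python's built-in parser.

```python
import bs4

# Hypothetical markup imitating one entry of the index page (not copied from the real site)
sample_html = """
<div class="video-summary-data">
  <a href="/video/2668/writing-restful-web-services-with-flask">Writing RESTful Web Services with Flask</a>
  <a href="/category/50/pycon-us-2014">PyCon US 2014</a>
</div>
"""

soup = bs4.BeautifulSoup(sample_html, 'html.parser')
# Only <a> elements inside the summary div whose href begins with /video are selected
video_links = soup.select('div.video-summary-data a[href^="/video"]')
print(video_links)
```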

Since we are really interested in the link itself and not in the <a> element that contains it, we can improve the above with a list comprehension:
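Applied to a similar hypothetical fragment (the second video URL below is made up for illustration), a list comprehension that keeps only the href attribute of each matching element might look like this:

```python
import bs4

# Hypothetical markup with two video entries
sample_html = """
<div class="video-summary-data">
  <a href="/video/2668/writing-restful-web-services-with-flask">Writing RESTful Web Services with Flask</a>
</div>
<div class="video-summary-data">
  <a href="/video/1234/another-talk">Another talk</a>
</div>
"""

soup = bs4.BeautifulSoup(sample_html, 'html.parser')
# Keep just the link target of each matching <a> element
video_page_urls = [a.attrs.get('href')
                   for a in soup.select('div.video-summary-data a[href^="/video"]')]
print(video_page_urls)
```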

And now we have a list of all the links to the individual pages for each session!

The following script shows a cleaned up version of all the techniques we have learned so far:
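One possible shape for the cleaned-up script is sketched below. It separates the download from the parsing so the parsing half can be tried offline; the function name extract_video_page_urls() is introduced here for illustration, and the live site may no longer match these selectors.

```python
import bs4
import requests

root_url = 'http://pyvideo.org'
index_url = root_url + '/category/50/pycon-us-2014'


def extract_video_page_urls(html):
    """Return the relative URLs of all video pages linked from an index page."""
    soup = bs4.BeautifulSoup(html, 'html.parser')
    return [a.attrs.get('href')
            for a in soup.select('div.video-summary-data a[href^="/video"]')]


def get_video_page_urls():
    """Download the index page and return the video page URLs it links to."""
    response = requests.get(index_url, timeout=10)
    response.raise_for_status()
    return extract_video_page_urls(response.text)


if __name__ == '__main__':
    print(get_video_page_urls())
```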

If you run the above script, you will get a long list of URLs as a result. Now we need to parse each of these pages to get more information about each PyCon session.

Scraping Linked Pages


The next step is to load each of the pages in our URL list. If you want to see how these pages look, here is an example: http://pyvideo.org/video/2668/writing-restful-web-services-with-flask. Yes, that's me, that is one of my sessions!

From these pages we can scrape the session title, which appears at the top. We can also obtain the names of the speakers and the YouTube link from the sidebar that appears on the right side below the embedded video. The code that gets these elements is shown below:
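A sketch of the per-page scraping follows. The div#videobox h3 selector comes from the description in this article, but the sidebar id (div#sidebar) and the href prefixes used to recognize speaker and YouTube links are assumptions made for illustration, since the original markup is not reproduced here. parse_video_page() is a hypothetical helper split out so the parsing can be tested on a saved page.

```python
import bs4
import requests

root_url = 'http://pyvideo.org'


def parse_video_page(html):
    """Extract the title, speaker names and YouTube link from a video page."""
    soup = bs4.BeautifulSoup(html, 'html.parser')
    video_data = {}
    # select() returns a list even when there is a single match, hence [0]
    video_data['title'] = soup.select('div#videobox h3')[0].get_text().strip()
    # ASSUMPTION: the sidebar id and the /speaker and YouTube href prefixes are hypothetical
    video_data['speakers'] = [a.get_text().strip()
                              for a in soup.select('div#sidebar a[href^="/speaker"]')]
    video_data['youtube_url'] = soup.select(
        'div#sidebar a[href^="http://www.youtube.com"]')[0].attrs.get('href')
    return video_data


def get_video_data(video_page_url):
    """Download a video page given its relative URL and parse it."""
    # The links scraped from the index page are relative, so root_url is prepended
    response = requests.get(root_url + video_page_url, timeout=10)
    response.raise_for_status()
    return parse_video_page(response.text)
```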

A few things to note about this function:

- The URLs returned from the scraping of the index page are relative, so the root_url needs to be prepended.

- The session title is obtained from the <h3> element inside the <div> with id videobox. Note that [0] is needed because the select() call returns a list, even if there is only one match.

- The speaker names and YouTube links are obtained in a similar way to the links in the index page.

Now all that remains is to scrape the views count from the YouTube page for each video. This is actually very simple to write as a continuation of the above function. In fact, it is so simple that while we are at it, we can also scrape the likes and dislikes counts:
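The following sketch shows how the counts could be extracted and converted. The element ids in STAT_IDS are hypothetical placeholders: YouTube has redesigned its pages several times since 2014 and no longer displays dislike counts publicly, so real selectors would have to be found by inspecting the current page. count_from_text() implements the split-then-strip-non-digits conversion explained in the next paragraph.

```python
import re

import bs4
import requests

# ASSUMPTION: hypothetical element ids, not YouTube's actual markup
STAT_IDS = {
    'views': 'watch-view-count',
    'likes': 'watch-like-count',
    'dislikes': 'watch-dislike-count',
}


def count_from_text(text):
    """Turn text such as '1,344 views' into the integer 1344."""
    first_word = text.split()[0]                 # '1,344 views' -> '1,344'
    digits = re.sub(r'[^0-9]', '', first_word)   # drop commas and other non-digits
    return int(digits)


def parse_youtube_stats(html):
    """Extract the view, like and dislike counts from a YouTube page."""
    soup = bs4.BeautifulSoup(html, 'html.parser')
    return {name: count_from_text(soup.select('#' + element_id)[0].get_text())
            for name, element_id in STAT_IDS.items()}


def get_youtube_stats(youtube_url):
    """Download a YouTube page and return its stats."""
    response = requests.get(youtube_url, timeout=10)
    response.raise_for_status()
    return parse_youtube_stats(response.text)
```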

The soup.select() calls above capture the stats for the video using selectors for the specific id names used in the YouTube page. But the text of the elements needs to be processed a bit before it can be converted to a number. Consider an example views count, which YouTube would show as '1,344 views'. To remove the text after the number, the contents are split at whitespace and only the first part is used. This first part is then filtered with a regular expression that removes any characters that are not digits, since the numbers can have commas in them. The resulting string is finally converted to an integer and stored.

To complete the scraping, the following function invokes all the previously shown code:
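A minimal sketch of that driver is shown below. The per-page function is passed in as a parameter (an illustrative choice so the sketch runs on its own); in the real script it would be the function that combines the pyvideo.org and YouTube scraping. The column layout produced by format_stats() is also an assumption.

```python
def collect_video_stats(video_page_urls, get_video_data):
    """Scrape each video page in turn (serially) and return one dict per session.

    get_video_data stands for the per-page scraping function from the previous
    steps; it is a parameter here only so this sketch runs on its own.
    """
    return [get_video_data(url) for url in video_page_urls]


def format_stats(results):
    """Return one aligned text line per session: views, likes, dislikes, title."""
    return '\n'.join('{views:>8} {likes:>6} {dislikes:>6}  {title}'.format(**video)
                     for video in results)
```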

Parallel Processing

The script up to this point works great, but with over a hundred videos it can take a while to run. In reality we aren't doing much work; most of the time is spent downloading all those pages, and during that time the script is blocked. It would be much more efficient if the script could run several of these download operations simultaneously, right?

Back when I wrote the scraping article using Node.js the parallelism came for free with the asynchronous nature of JavaScript. With Python this can be done as well, but it needs to be specified explicitly. For this example I'm going to start a pool of eight worker processes that can work concurrently. This is surprisingly simple:
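Here is one way to express this with multiprocessing.Pool, as a sketch. It mirrors the serial driver but hands the per-page calls to eight worker processes; collect_video_stats_parallel() is a hypothetical name, and the function passed in must be defined at module level so it can be pickled and sent to the workers.

```python
from multiprocessing import Pool


def collect_video_stats_parallel(video_page_urls, get_video_data, processes=8):
    """Scrape the video pages concurrently using a pool of worker processes.

    get_video_data must be a module-level function so that it can be pickled
    and sent to the worker processes.
    """
    with Pool(processes=processes) as pool:
        # Like map(), pool.map() calls get_video_data once per URL and returns the
        # results in input order, but the calls are spread over the worker processes
        return pool.map(get_video_data, video_page_urls)
```

On platforms where new processes are started with the spawn method (the default on Windows and macOS), each worker re-imports the main module, so the code that creates the pool should sit under an if __name__ == '__main__': guard.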

The multiprocessing.Pool class starts eight worker processes that wait to be given jobs to run. Why eight? It's twice the number of cores I have on my computer. While experimenting with different sizes for the pool, I found this to be the sweet spot: fewer than eight made the script run slower, and more than eight did not make it go any faster.

The pool.map() call is similar to the regular map() call in that it invokes the function given as the first argument once for each of the elements in the iterable given as the second argument. The big difference is that it distributes these calls among the processes owned by the pool, so in this example up to eight tasks run concurrently.

The time savings are considerable. On my computer the first version of the script completes in 75 seconds, while the pool version does the same work in 16 seconds!

The Complete Scraping Script

The final version of my scraping script does a few more things after the data has been obtained.

I've added a --sort command line option to specify a sorting criterion, which can be views, likes or dislikes. The script sorts the list of results in descending order by the specified field. Another option, --max, takes the number of results to show, in case you just want to see a few entries from the top. Finally, I have added a --csv option, which prints the data in CSV format instead of as an aligned table, to make it easy to export the data to a spreadsheet.
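The option handling could be implemented with argparse along these lines. This is a sketch, not the script from the gist: the default sort field (views), the help texts and the table layout are assumptions.

```python
import argparse
import csv
import io

FIELDS = ['views', 'likes', 'dislikes', 'title']


def parse_args(argv=None):
    """Parse the --sort, --max and --csv options."""
    parser = argparse.ArgumentParser(description='Show statistics for PyCon 2014 videos.')
    # ASSUMPTION: results are sorted by views when --sort is not given
    parser.add_argument('--sort', choices=['views', 'likes', 'dislikes'], default='views',
                        help='field to sort by, in descending order')
    parser.add_argument('--max', type=int, help='number of results to show')
    parser.add_argument('--csv', action='store_true',
                        help='output CSV instead of an aligned table')
    return parser.parse_args(argv)


def report(results, args):
    """Sort, trim and format the scraped results according to the options."""
    results = sorted(results, key=lambda video: video[args.sort], reverse=True)
    if args.max is not None:
        results = results[:args.max]
    out = io.StringIO()
    if args.csv:
        writer = csv.writer(out)
        writer.writerow(FIELDS)
        for video in results:
            writer.writerow([video[field] for field in FIELDS])
    else:
        for video in results:
            out.write('{views:>8} {likes:>6} {dislikes:>6}  {title}\n'.format(**video))
    return out.getvalue()
```

For example, print(report(results, parse_args()), end='') would print the scraped results according to the command line options.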

The complete script is available for download at this location: https://gist.github.com/miguelgrinberg/5f52ceb565264b1e969a.

Below is an example output with the 25 most viewed sessions at the time I'm writing this:

Conclusion

I hope you have found this article useful as an introduction to web scraping with Python. I have been pleasantly surprised by Python: the tools are robust and powerful, and the fact that the asynchronous optimizations can be left for the end is great compared to JavaScript, where there is no way to avoid working asynchronously from the start.

Miguel


Web scraping, web crawling, HTML scraping, and any other form of web data extraction can be complicated. Between obtaining the correct page source, parsing the source correctly, rendering JavaScript, and getting the data into a usable form, there’s a lot of work to be done. Different users have very different needs, and there are tools out there for all of them: people who want to build web scrapers without coding, developers who want to build web crawlers to crawl large sites, and everyone in between. Here is our list of the 10 best web scraping tools on the market right now. From open source projects to hosted SaaS solutions to desktop software, there is sure to be something for everyone looking to make use of web data!

1. Scraper API

Website: https://www.scraperapi.com/

Who is this for: Scraper API is a tool for developers building web scrapers, it handles proxies, browsers, and CAPTCHAs so developers can get the raw HTML from any website with a simple API call.

Why you should use it: Scraper API doesn’t burden you with managing your own proxies: it manages its own internal pool of hundreds of thousands of proxies from a dozen different proxy providers, and has smart routing logic that routes requests through different subnets and automatically throttles requests in order to avoid IP bans and CAPTCHAs. It’s the ultimate web scraping service for developers, with special pools of proxies for ecommerce price scraping, search engine scraping, social media scraping, sneaker scraping, ticket scraping and more! If you need to scrape millions of pages a month, you can ask them for a volume discount.

2. ScrapeSimple

Website: https://www.scrapesimple.com

Who is this for: ScrapeSimple is the perfect service for people who want a custom scraper built for them. Web scraping is made as simple as filling out a form with instructions for what kind of data you want.

Why you should use it: ScrapeSimple lives up to its name with a fully managed service that builds and maintains custom web scrapers for customers. Just tell them what information you need from which sites, and they will design a custom web scraper to deliver the information to you periodically (daily, weekly, monthly, or whatever you need) in CSV format directly to your inbox. This service is perfect for businesses that just want an HTML scraper without needing to write any code themselves. Response times are quick and the service is incredibly friendly and helpful, making it perfect for people who just want the full data extraction process taken care of for them.

3. Octoparse


Website: https://www.octoparse.com/

Who is this for: Octoparse is a fantastic tool for people who want to extract data from websites without having to code, while still having control over the full process through its easy-to-use user interface.

Why you should use it: Octoparse is the perfect tool for people who want to scrape websites without learning to code. It features a point-and-click screen scraper, allowing users to scrape behind login forms, fill in forms, input search terms, scroll through infinite scroll, render JavaScript, and more. It also includes a site parser and a hosted solution for users who want to run their scrapers in the cloud. Best of all, it comes with a generous free tier allowing users to build up to 10 crawlers for free. For enterprise-level customers, they also offer fully customized crawlers and managed solutions where they take care of running everything for you and just deliver the data to you directly.

4. ParseHub

Website: https://www.parsehub.com/

Who is this for: Parsehub is an incredibly powerful tool for building web scrapers without coding. It is used by analysts, journalists, data scientists, and everyone in between.

Why you should use it: Parsehub is dead simple to use: you can build web scrapers simply by clicking on the data that you want. It then exports the data in JSON or Excel format. It has many handy features such as automatic IP rotation, scraping behind login walls, going through dropdowns and tabs, getting data from tables and maps, and much more. In addition, it has a generous free tier, allowing users to scrape up to 200 pages of data in just 40 minutes! Parsehub is also nice in that it provides desktop clients for Windows, Mac OS, and Linux, so you can use them from your computer no matter what system you’re running.

5. Scrapy

Website: https://scrapy.org

Who is this for: Scrapy is a web scraping library for Python developers looking to build scalable web crawlers. It’s a full on web crawling framework that handles all of the plumbing (queueing requests, proxy middleware, etc.) that makes building web crawlers difficult.

Why you should use it: As an open source tool, Scrapy is completely free. It is battle tested, has been one of the most popular Python libraries for years, and is probably the best Python web scraping tool for new applications. It is well documented and there are many tutorials on how to get started. In addition, deploying the crawlers is very simple and reliable; the processes can run themselves once they are set up. As a fully featured web scraping framework, it has many middleware modules available to integrate various tools and handle various use cases (handling cookies, user agents, etc.).

6. Diffbot

Website: https://www.diffbot.com


Who is this for: Enterprises that have specific data crawling and screen scraping needs, particularly those who scrape websites that often change their HTML structure.

Why you should use it: Diffbot is different from most page scraping tools out there in that it uses computer vision (instead of HTML parsing) to identify relevant information on a page. This means that even if the HTML structure of a page changes, your web scrapers will not break as long as the page looks the same visually. This is an incredible feature for long-running, mission-critical web scraping jobs. While it may be a bit pricey (the cheapest plan is $299/month), it offers a premium service that may make it worth it for large customers.

7. Cheerio

Website: https://cheerio.js.org

Who is this for: NodeJS developers who want a straightforward way to parse HTML. Those familiar with jQuery will immediately appreciate its syntax, arguably the best available for JavaScript web scraping.

Why you should use it: Cheerio offers an API similar to jQuery, so developers familiar with jQuery will immediately feel at home using Cheerio to parse HTML. It is blazing fast, and offers many helpful methods to extract text, HTML, classes, ids, and more. It is by far the most popular HTML parsing library written in NodeJS, and is probably the best NodeJS web scraping tool for new projects.

8. BeautifulSoup

Website: https://www.crummy.com/software/BeautifulSoup/

Who is this for: Python developers who just want an easy interface to parse HTML, and don’t necessarily need the power and complexity that comes with Scrapy.

Why you should use it: Like Cheerio for NodeJS developers, Beautiful Soup is by far the most popular HTML parser for Python developers. It’s been around for over a decade now and is extremely well documented, with many web parsing tutorials teaching developers to use it to scrape various websites in both Python 2 and Python 3. If you are looking for a Python HTML parsing library, this is the one you want.

9. Puppeteer

Website: https://github.com/GoogleChrome/puppeteer

Who is this for: Puppeteer is a headless Chrome API for NodeJS developers who want very granular control over their scraping activity.

Why you should use it: As an open source tool, Puppeteer is completely free. It is well supported, actively developed, and backed by the Google Chrome team itself. It is quickly replacing Selenium and PhantomJS as the default headless browser automation tool. It has a well thought out API, and automatically installs a compatible Chromium binary as part of its setup process, meaning you don’t have to keep track of browser versions yourself. While it’s much more than just a web crawling library, it’s often used to scrape data from sites that require JavaScript to display information, since it handles scripts, stylesheets, and fonts just like a real browser. Note that while it is a great solution for sites that require JavaScript to display data, it is very CPU and memory intensive, so using it for sites where a full-blown browser is not necessary is probably not a great idea. Most times a simple GET request should do the trick!

10. Mozenda

Website: https://www.mozenda.com/

Who is this for: Enterprises looking for a cloud-based, self-serve web page scraping platform need look no further. With over 7 billion pages scraped, Mozenda has experience serving enterprise customers from all around the world.

Why you should use it: Mozenda allows enterprise customers to run web scrapers on its robust cloud platform. It sets itself apart with its customer service (providing both phone and email support to all paying customers). Its platform is highly scalable and allows for on-premises hosting as well. Like Diffbot, it is a bit pricey, and its lowest plans start at $250/month.

Honorable Mention 1. Kimura

Website: https://github.com/vifreefly/kimuraframework

Who is this for: Kimura is an open source web scraping framework written in Ruby; it makes it incredibly easy to get a Ruby web scraper up and running.

Why you should use it: Kimura is quickly becoming known as the best Ruby web scraping library, as it’s designed to work with headless Chrome/Firefox, PhantomJS, and normal GET requests, all out of the box. Its syntax is similar to Scrapy, and developers writing Ruby web scrapers will love all of the nice configuration options to do things like set a delay, rotate user agents, and set default headers.

Honorable Mention 2. Goutte

Website: https://github.com/FriendsOfPHP/Goutte

Who is this for: Goutte is an open source web crawling framework written in PHP; it makes it super easy to extract data from HTML/XML responses using PHP.

Why you should use it: Goutte is a very straightforward, no-frills framework that is considered by many to be the best PHP web scraping library, as it’s designed for simplicity, handling the vast majority of HTML/XML use cases without too much additional cruft. It also seamlessly integrates with the excellent Guzzle requests library, which allows you to customize the framework for more advanced use cases.

The open web is by far the greatest global repository for human knowledge; there is almost no information that you can’t find through extracting web data. Because web scraping is done by many people of various levels of technical ability and know-how, there are many tools available that serve everyone from people who don’t want to write any code to seasoned developers just looking for the best open source solution in their language of choice.

Hopefully, this list of tools has been helpful in letting you take advantage of this information for your own projects and businesses. If you have any web scraping jobs you would like to discuss with us, please get in touch. Happy scraping!